The Division of Arts and Machine Creativity (AMC) at the Hong Kong University of Science and Technology (HKUST) is delighted to share its participation in the ACM Conference on Human Factors in Computing Systems (CHI) 2026, the world’s premier forum for human–computer interaction (HCI) research, held in Barcelona, Spain, from 13 to 17 April 2026.

This year, AMC faculty continued to advance the frontiers of human-centered AI, creativity support tools, interactive systems, and computational design, with five high-quality research papers accepted to CHI 2026. These achievements highlight AMC’s commitment to impactful, interdisciplinary scholarship at the intersection of technology, design, and human experience.

Our warmest congratulations go to Prof. Hongbo FU, Prof. Anyi RAO and Prof. Hai-Ning LIANG, along with their collaborators, for their outstanding contributions. Their work reflects AMC’s continued leadership in shaping the future of interactive technologies and creativity computing through rigorous research and thoughtful design.

Accepted Research Papers

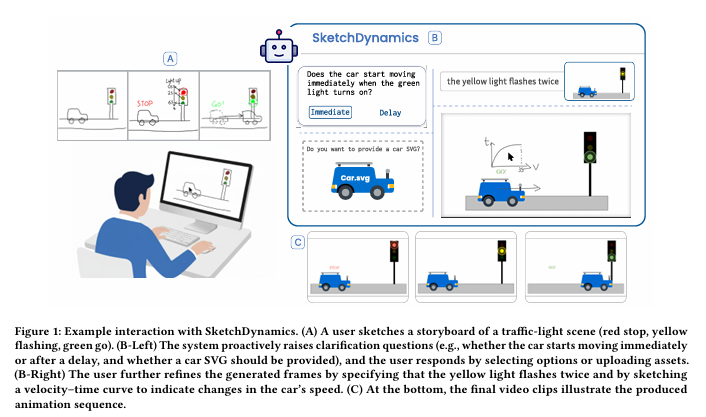

- SketchDynamics: Exploring Free-Form Sketches for Dynamic Intent Expression in Animation Generation

Boyu Li, Lin-Ping Yuan, Zeyu Wang, Hongbo Fu

Proposes an interactive sketch-based animation authoring approach that leverages free-form storyboards, vision–language model interpretation, and adaptive clarification to translate dynamic intent into generated animations, enabling efficient and expressive motion creation with minimal input.

- Collaposer: Transforming Photo Collections into Visual Assets for Storytelling with Collages

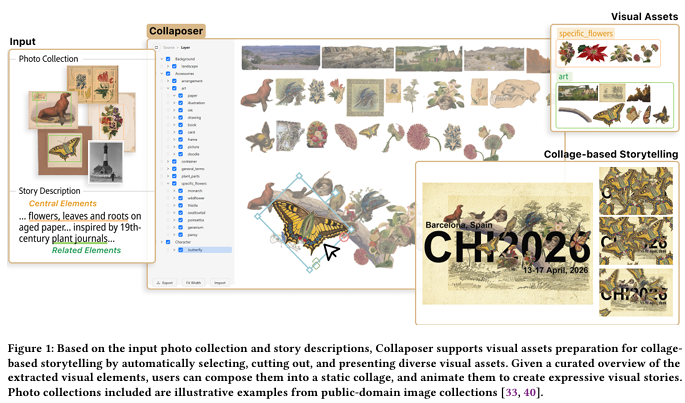

Jiayi Zhou, Liwenhan Xie, Jiaju Ma, Zheng Wei, Huamin Qu, Anyi Rao

Introduces a story-driven collage authoring system that automatically selects, segments, and organizes visual elements from photo collections using semantic inference and large language models, streamlining asset preparation for expressive visual storytelling.

- Improving Steering Law Throughput Calculation by Defining Effective Parameters in 3D Virtual Environment

Mohammadreza Amini, Wolfgang Stuerzlinger, Shota Yamanaka, Hai-Ning Liang, Anil Ufuk Batmaz

Proposes a bivariate trajectory-based effective throughput formulation for 3D steering in VR, using bivariate effective width and effective amplitude to better capture real movement variability, yielding more stable throughput values and improved Steering Law model fits across speed–accuracy trade-offs.

- Overcoming Translation Delays: Towards Better Subtitle Design for Foreign Language Conversations in Extended Reality

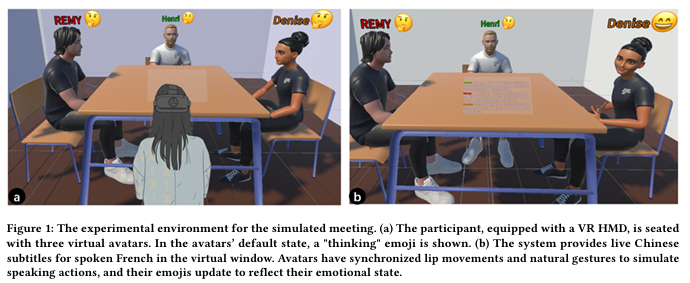

Ziming Li, Rongkai Shi, Hongji Li, Jialin Wang, Pan Hui, Hai-Ning Liang

Investigates how translation latency affects multilingual communication in XR and introduces speaker-anchored subtitle designs (especially a merged-context interface) that mitigate delay effects, improving emotion attribution, co-presence, and overall user experience despite unavoidable translation delays.

- Investigating How Physical Surfaces Can Serve as Common-Region Cues for Perceptual Grouping of Virtual Elements in Augmented Reality

Xuanhui Yang, Xuning Hu, Hai-Ning Liang, Xiaojuan Ma

Explores how physical surfaces act as common-region cues for perceptual grouping in AR, showing that surface-based cues significantly influence grouping in 3D and that conflicts between physical common-region cues and virtual proximity cues reduce grouping clarity, informing design guidelines for environment-aware AR interfaces.